Machine Learning, Deep Learning, Neural Networks and Artificial Intelligence: what is all the fuss about, and what does it have to do with audio or audio workflows? We will try to give a brief introduction to what these terms mean. To start off, here are the definitions we came up with, kept as short as possible:

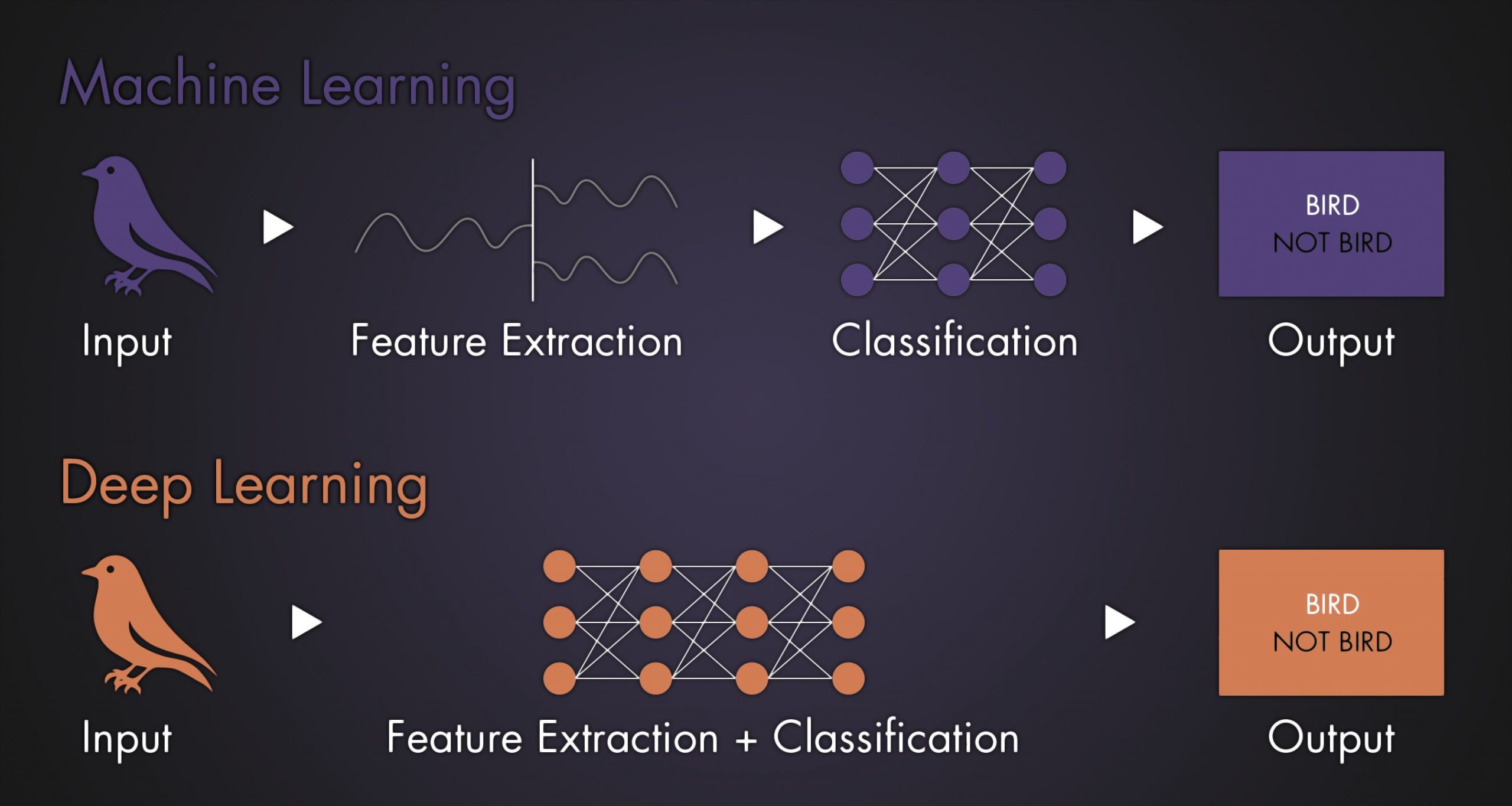

Machine Learning (ML): Automatically learns from input data to create useful outputs with the help of manually (human) specified features.

Deep Learning (DL): One of many Machine Learning approaches; it extracts features autonomously.

Artificial Intelligence (AI): Uses the past experience it learned via Machine Learning to creatively get to new outputs.

Alright, but how does it work, and what does that mean? Here is a little example, not audio-related so that it is easier to understand:

Say you want to analyze pictures and want the application to tell you whether a picture shows a bird or not.

With Machine Learning in general, you would feed the machine tons of pictures of birds. Then you (the programmer) would specify the features that make something a bird: this is an eye, these are feathers, this is a wing, this is a bill. The machine learns to classify these features, and on a new, unseen picture it looks for any or all of them to decide whether that picture shows a bird or not.
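To make the idea of manually specified features more concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the feature names, the tiny training set and the choice of a simple perceptron as the learning algorithm. The point is only that a human decides which features exist, while the machine learns how much each one counts.

```python
# Hypothetical hand-picked features: a human decided these matter for "bird".
FEATURES = ["has_feathers", "has_wings", "has_beak"]

# Made-up training set: (feature values, label), 1 = bird, 0 = not a bird.
TRAINING_DATA = [
    ({"has_feathers": 1, "has_wings": 1, "has_beak": 1}, 1),  # sparrow
    ({"has_feathers": 1, "has_wings": 1, "has_beak": 1}, 1),  # eagle
    ({"has_feathers": 0, "has_wings": 1, "has_beak": 0}, 0),  # bat
    ({"has_feathers": 0, "has_wings": 0, "has_beak": 0}, 0),  # cat
    ({"has_feathers": 0, "has_wings": 1, "has_beak": 0}, 0),  # airplane
    ({"has_feathers": 0, "has_wings": 0, "has_beak": 1}, 0),  # platypus
]


def score(features, weights, bias):
    """Weighted sum of the hand-picked features."""
    return bias + sum(weights[name] * features[name] for name in FEATURES)


def train_perceptron(data, epochs=20, learning_rate=1.0):
    """Learn one weight per feature with the classic perceptron rule."""
    weights = {name: 0.0 for name in FEATURES}
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            prediction = 1 if score(features, weights, bias) > 0 else 0
            error = label - prediction  # 0 if correct, +1 or -1 if wrong
            bias += learning_rate * error
            for name in FEATURES:
                weights[name] += learning_rate * error * features[name]
    return weights, bias


def is_bird(features, weights, bias):
    """Classify a new, unseen example described by the same features."""
    return score(features, weights, bias) > 0


weights, bias = train_perceptron(TRAINING_DATA)

# A new example the model has not seen, described by the same features.
butterfly = {"has_feathers": 0, "has_wings": 1, "has_beak": 0}
print("butterfly is a bird?", is_bird(butterfly, weights, bias))
```

Note that the program never looks at a picture. Turning pixels into `has_feathers` and friends is exactly the manual work that this approach leaves to the human.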

Deep Learning would work without the manual feature extraction: you feed it even more pictures of birds, and the Neural Network tries to find the features by itself and classifies them in the same process. Now the machine can again tell you whether a new picture is a bird or not (but nothing else).
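As a rough illustration of learning without hand-picked features, the sketch below trains a very small neural network directly on raw pixel values. It is a toy under stated assumptions: the 2x2 "images", their labels, the network size and the training settings are all invented for this example, and a real Deep Learning system would use far more data, many more layers and a dedicated library.

```python
import math
import random

# Made-up 2x2 "images" as four raw pixel values (top-left, top-right,
# bottom-left, bottom-right). Label 1 = "bird", 0 = "not a bird".
# In this toy data, "birds" happen to have a bright top row, but the
# network is never told that.
IMAGES = [
    ([0.9, 0.8, 0.1, 0.2], 1),
    ([1.0, 0.9, 0.0, 0.1], 1),
    ([0.8, 1.0, 0.2, 0.0], 1),
    ([0.1, 0.2, 0.9, 0.8], 0),
    ([0.0, 0.1, 1.0, 0.9], 0),
    ([0.2, 0.0, 0.8, 1.0], 0),
]


def make_network(n_input=4, n_hidden=3, seed=0):
    """Randomly initialised weights; the seed only makes runs repeatable."""
    rng = random.Random(seed)
    return {
        "w_hidden": [[rng.uniform(-0.5, 0.5) for _ in range(n_input)]
                     for _ in range(n_hidden)],
        "b_hidden": [0.0] * n_hidden,
        "w_out": [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)],
        "b_out": 0.0,
    }


def forward(net, pixels):
    """Raw pixels -> learned hidden features -> probability of 'bird'."""
    hidden = [math.tanh(sum(w * p for w, p in zip(row, pixels)) + b)
              for row, b in zip(net["w_hidden"], net["b_hidden"])]
    z = sum(w * h for w, h in zip(net["w_out"], hidden)) + net["b_out"]
    return hidden, 1.0 / (1.0 + math.exp(-z))


def mean_loss(net, data):
    """Average cross-entropy loss over the data set."""
    total = 0.0
    for pixels, label in data:
        _, p = forward(net, pixels)
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(data)


def train(net, data, epochs=500, learning_rate=0.1):
    """Plain stochastic gradient descent with backpropagation."""
    for _ in range(epochs):
        for pixels, label in data:
            hidden, p = forward(net, pixels)
            d_out = p - label  # gradient at the output pre-activation
            for j, h in enumerate(hidden):
                # Gradient reaching hidden unit j (uses the old output weight).
                d_hidden = d_out * net["w_out"][j] * (1 - h * h)
                net["w_out"][j] -= learning_rate * d_out * h
                for i, pixel in enumerate(pixels):
                    net["w_hidden"][j][i] -= learning_rate * d_hidden * pixel
                net["b_hidden"][j] -= learning_rate * d_hidden
            net["b_out"] -= learning_rate * d_out


net = make_network()
loss_before = mean_loss(net, IMAGES)
train(net, IMAGES)
loss_after = mean_loss(net, IMAGES)
print("loss before training:", loss_before)
print("loss after training: ", loss_after)
```

The hidden layer plays the role of the features: its weights start out random and are adjusted during training, so whatever the network ends up looking for in the pixels was found by the training process rather than specified by a programmer.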

Artificial Intelligence could potentially adapt this information to other domains. For example, if you now give it one picture of a cat, an AI machine would try to learn what cats are by itself, transferring what it learned from birds to another topic.

These three buzzwords are often used as synonyms, which is not really correct. You might remember the media hype when Hydra, Junior and Fritz (all three computers) beat the chess masters of their time. Headlines were full of “Artificial Intelligence beat chess master”, but those programs were not Artificial Intelligence in the sense described above. They were fed with the rules of chess, and the machine calculated the best move in a given scenario before making it. If you had changed the rules just a little bit, for example by adding a row of squares, the machine would not have been able to adapt. It would simply make no move at all. Artificial Intelligence, on the other hand, would be able to adapt to the new rule set and try to learn how to play the “new” game.

The same goes for iZotope's Neutron and Ozone 9. iZotope never claimed that these products were Artificial Intelligence and clearly promoted them as Machine Learning. But the media catch the buzzwords and create headlines like “Now Artificial Intelligence handles all the mixing”. There is quite a bit of confusion here.

Does it matter? Probably not much, but it is definitely good to understand what these terms mean, not only in the audio business but in general. Machine Learning, Deep Learning and, increasingly, Artificial Intelligence will play bigger roles in our daily lives.

The tech is here, everyone uses it, and everyone can already access and develop with it. So why not dive in to make our work easier and free up time for truly creative, personal or stylistic decisions instead of basic editing? Or even use this tech for inspiration, creating new audio output that you would probably not have thought of, and work from there? The possibilities are endless, and the technology is only now starting to enter our production workflows.

Our most recent audio tool, DEBIRD, uses Deep Learning to automatically remove bird noises from your audio files. Check out the 7-day free trial!

For another interesting article on the same topic, see www.jelvix.com.